UI / UX Design

Brainly AI-Powered Test Prep

Executed a data-driven design strategy by combining qualitative interviews, quantitative surveys, and competitive audits to build a zero-input, adaptive testing engine that cut self-reported study setup time by 40%.

Year: 2025

Industry: EdTech

Employer: Brainly

Project Duration: 8 weeks

Overview

Students shouldn't have to study how to study.

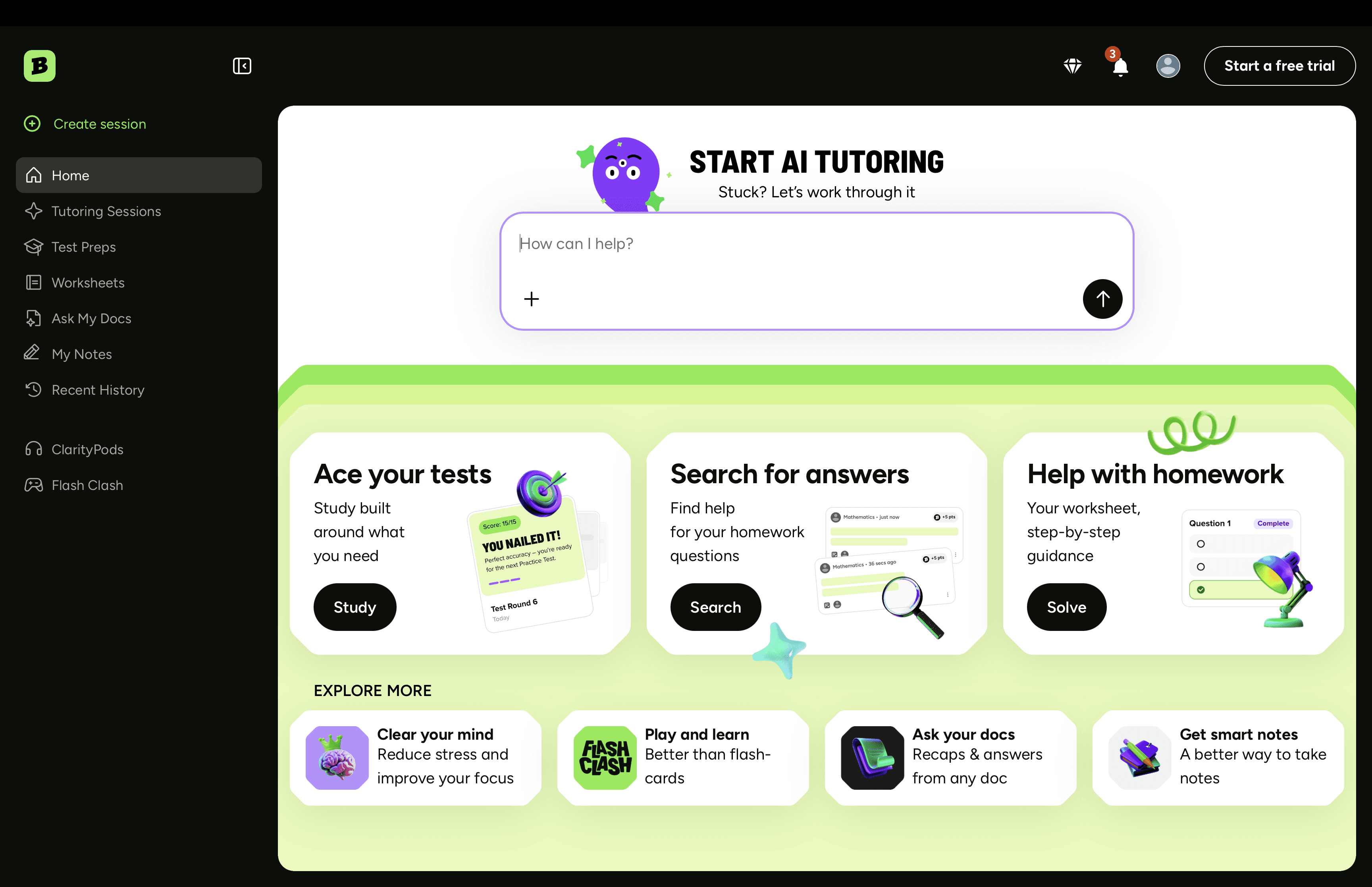

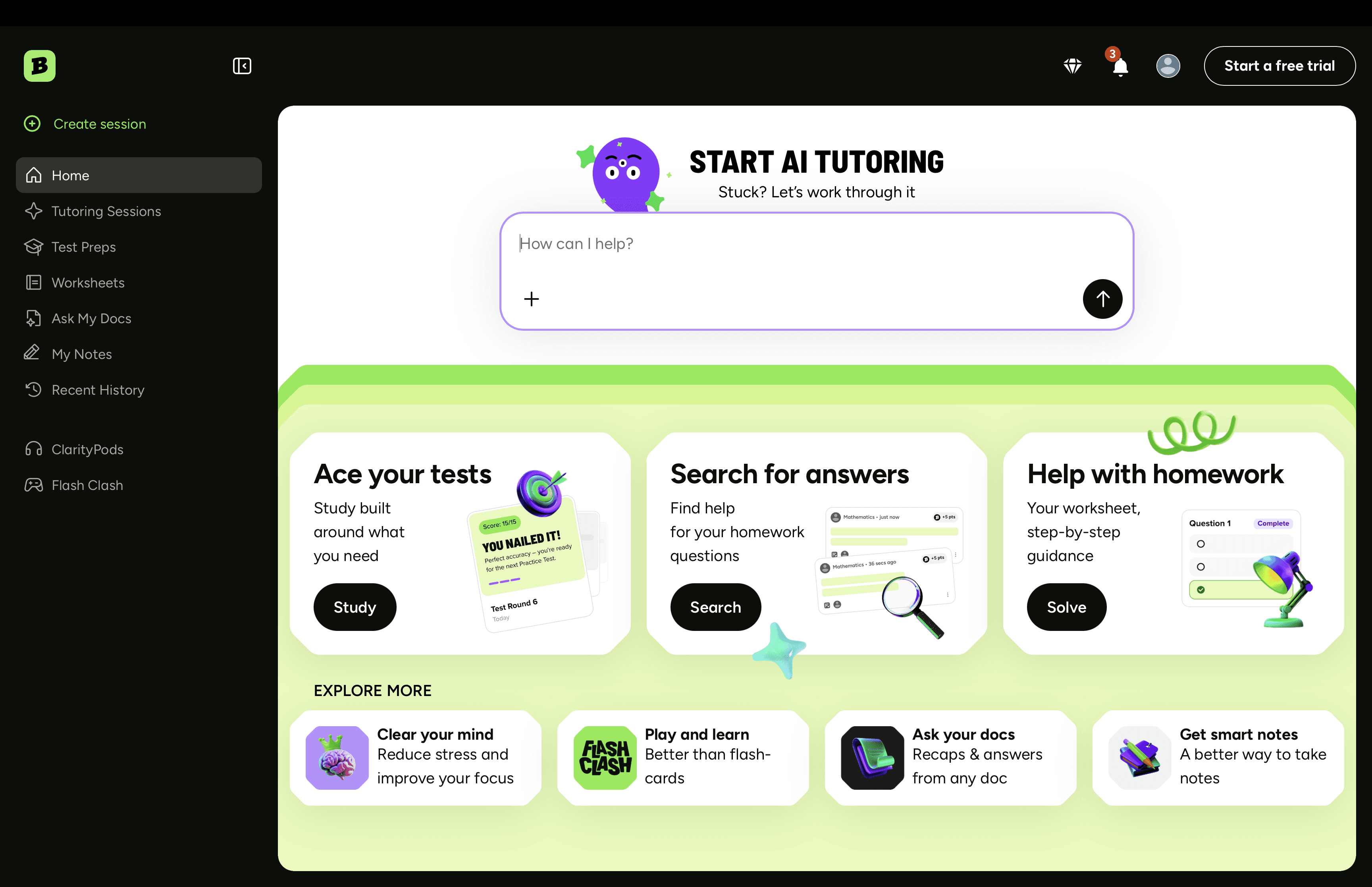

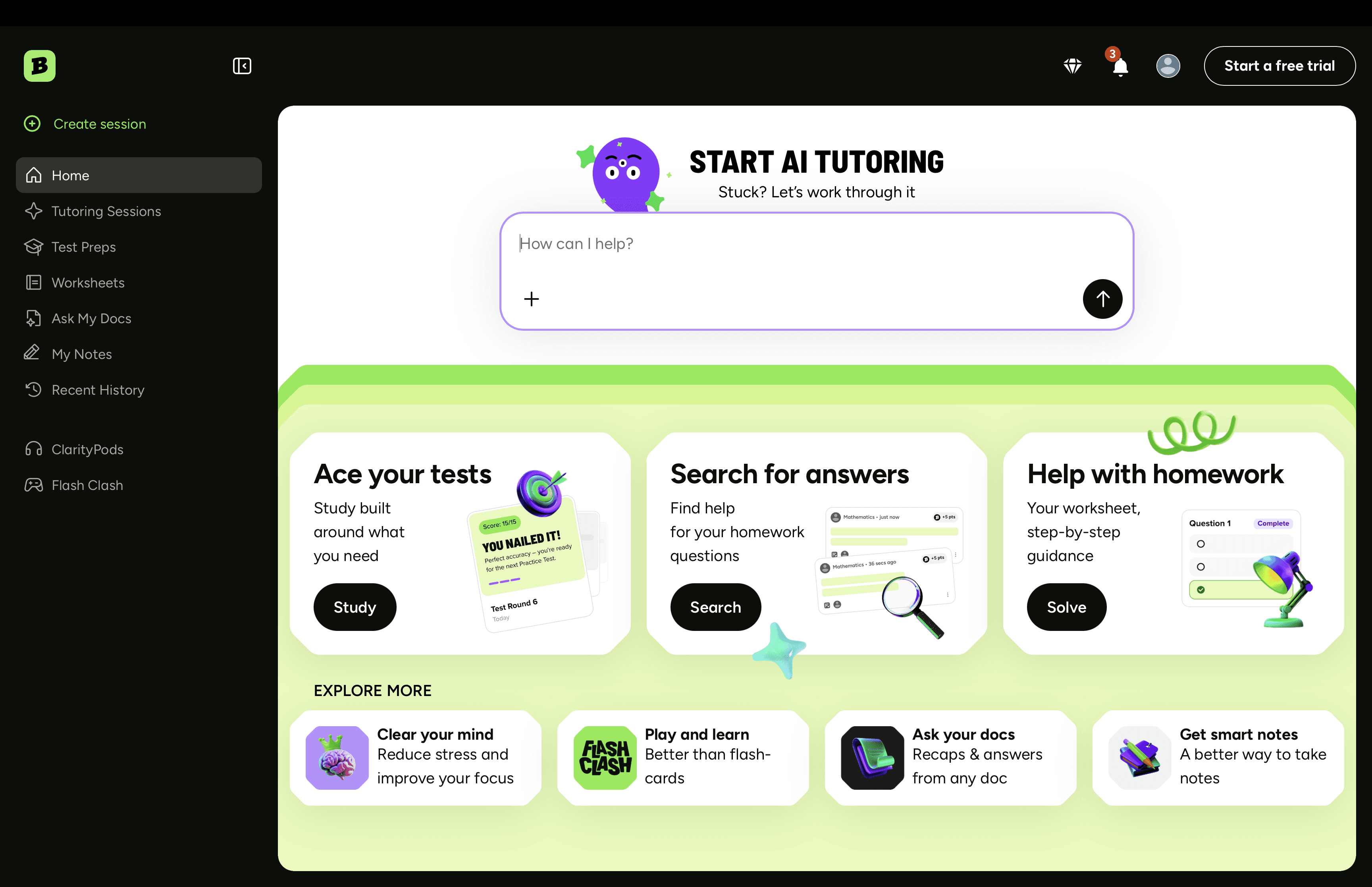

I led design end-to-end on Brainly Test Prep, an AI feature that turns the homework students already do into a personalized, adaptive study plan. No flashcards to build. No "where do I even start" panic the night before an exam.

First 60 days post-launch:

+30% weekly engagement vs. control

−40% self-reported study setup time

4.5 / 5 average CSAT

(Brainly serves 15M+ MAU, so small UX wins compound fast.)

The problem

Brainly's own research told the story: 28% of US students name exams as their #1 source of school anxiety, and 68% admit they don't give themselves enough time to study. The gap wasn't motivation. It was knowing where to start.

Tools like Quizlet and StudySmarter only made it worse. They assume students will sit down, organize materials, and build their own study set. Cognitive load on top of cognitive load.

Our hypothesis: if we removed the setup work entirely and met students where their materials already lived (Brainly already had their homework and Q&A history), we'd turn studying from a planning problem into a doing problem.

Goals & KPIs

Three things we committed to before any pixels got pushed:

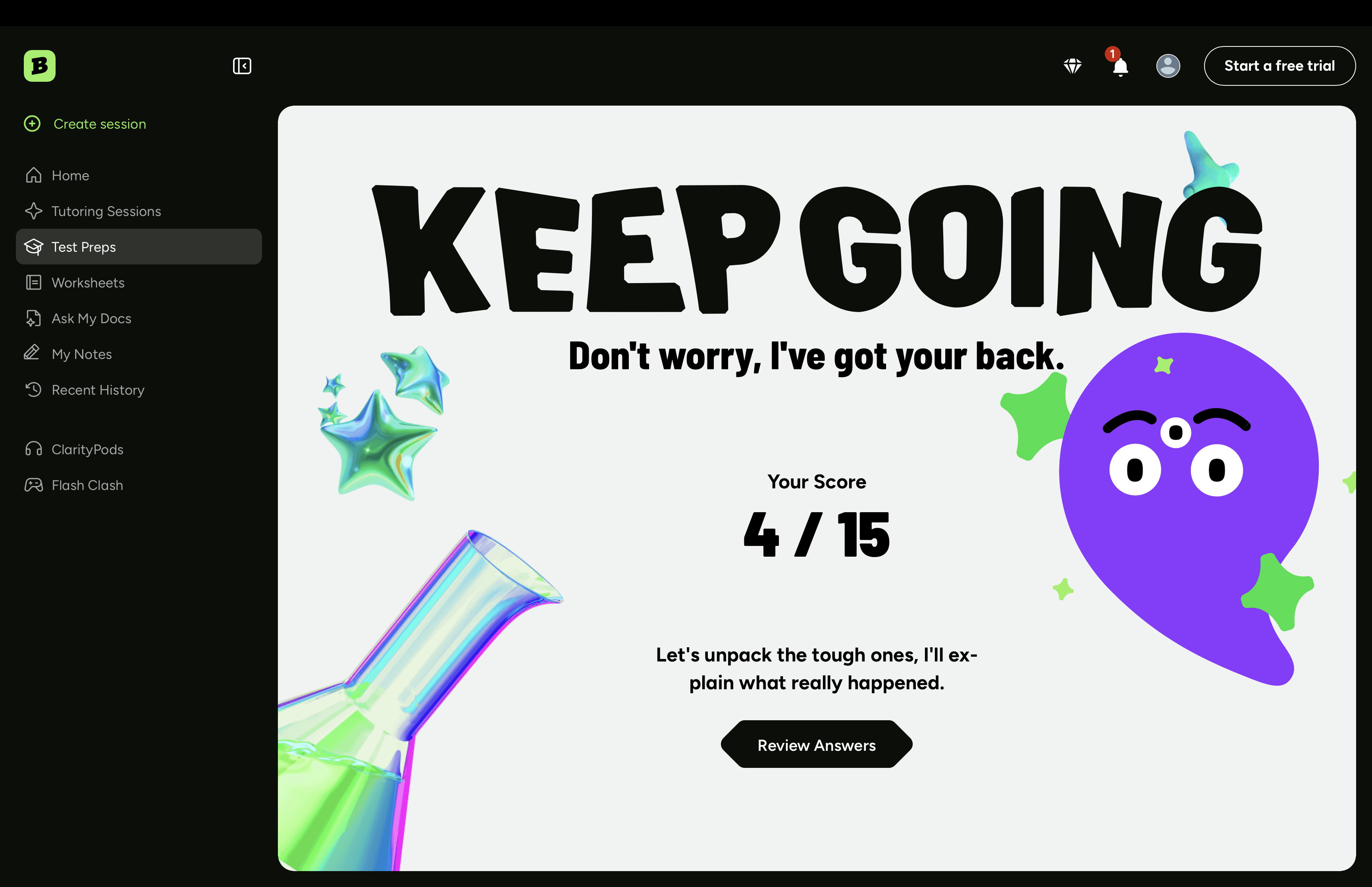

Engagement: +20% weekly returns from Test Prep users vs. non-users

Time-to-first-quiz: under 60 seconds from upload

Satisfaction: ≥ 4.0 CSAT in the first month
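To make the three targets concrete, here is a minimal sketch of how they could be checked against launch data. It assumes "weekly returns" means the share of users who come back in a given week, that "+20%" is a relative lift over non-users, and that time-to-first-quiz is judged on the median. All names (`LaunchMetrics`, `relative_lift`, `kpis_met`) are hypothetical, not Brainly's analytics schema.

```python
from dataclasses import dataclass


@dataclass
class LaunchMetrics:
    """Hypothetical launch metrics; field names are illustrative, not Brainly's schema."""
    test_prep_weekly_return_rate: float  # share of Test Prep users who return in a given week
    control_weekly_return_rate: float    # same share for non-users (control)
    median_seconds_to_first_quiz: float  # assumption: the target is read as a median
    avg_csat: float                      # average CSAT on a 1-5 scale


def relative_lift(treatment: float, control: float) -> float:
    """Relative lift of treatment over control: 0.20 means +20%."""
    if control <= 0:
        raise ValueError("control rate must be positive")
    return (treatment - control) / control


def kpis_met(m: LaunchMetrics) -> dict[str, bool]:
    """Check each of the three pre-committed targets."""
    return {
        # Engagement: at least +20% weekly returns vs. non-users
        "engagement": relative_lift(
            m.test_prep_weekly_return_rate, m.control_weekly_return_rate
        ) >= 0.20,
        # Time-to-first-quiz: under 60 seconds from upload
        "time_to_first_quiz": m.median_seconds_to_first_quiz < 60,
        # Satisfaction: CSAT of 4.0 or higher in the first month
        "satisfaction": m.avg_csat >= 4.0,
    }
```

Under this reading the lift is relative, not percentage points: a weekly return rate moving from 30% to 36% already counts as +20%, even though it is only six points.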

Research

20 interviews (ages 13–19) and 500+ surveys. The qual surfaced an emotional layer I hadn't expected: students were carrying expectations from parents, peers, and teachers, and planning study time was where it all collapsed. Multiple kids said some version of "I open my notebook and just stare at it."

The quant confirmed it: 80% preferred automation over building their own flashcards, 60% felt overwhelmed during exam season, and 55% specifically asked for daily reminders.

Students didn't want more features. They wanted something to tell them what to do next.

Competitive landscape

A full audit showed a clear white space: nobody had nailed zero-input ingestion plus mistake-driven adaptive review.

| Capability | Brainly | Quizlet | StudySmarter | Khan Academy |
|---|---|---|---|---|
| Zero-input homework ingestion | ✅ | ❌ | ❌ | ❌ |
| AI-generated quizzes | ✅ | 🔸 | ✅ | ❌ |
| Adapts to student mistakes | ✅ | ❌ | ❌ | 🔸 |
| Study plan tied to exam date | ✅ | ❌ | 🔸 | ❌ |

✅ = yes · 🔸 = partial · ❌ = no

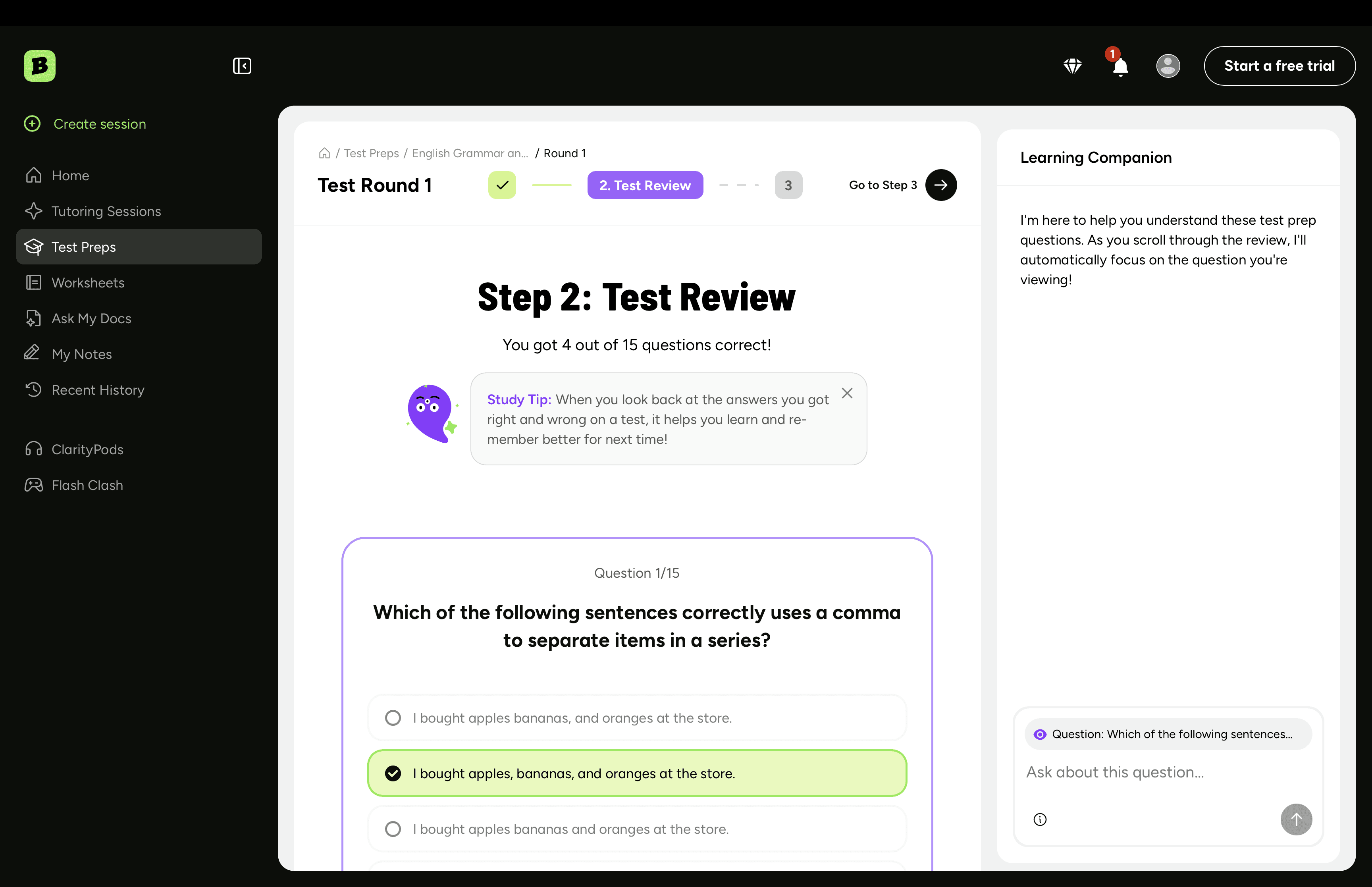

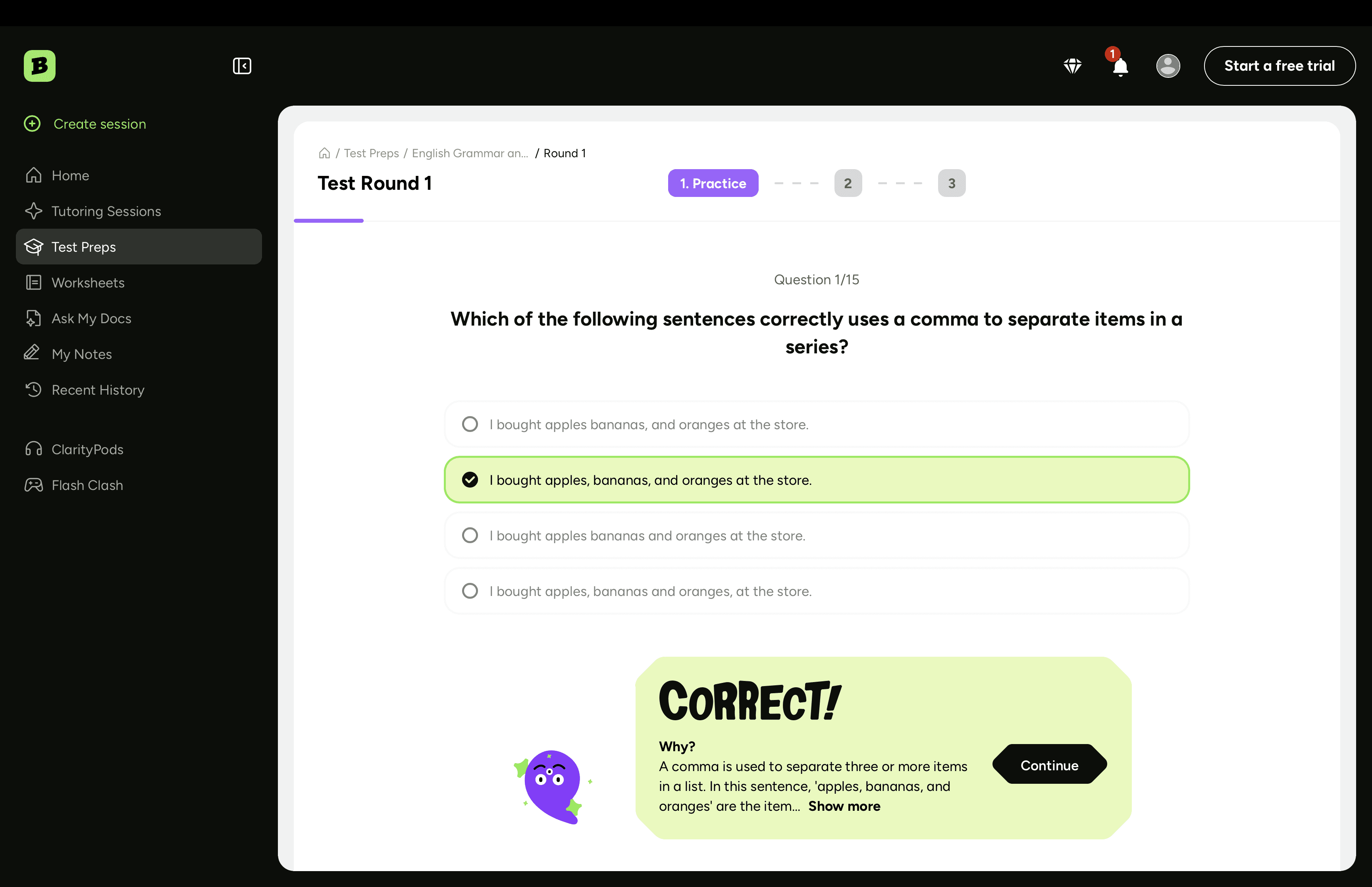

Designing for AI uncertainty

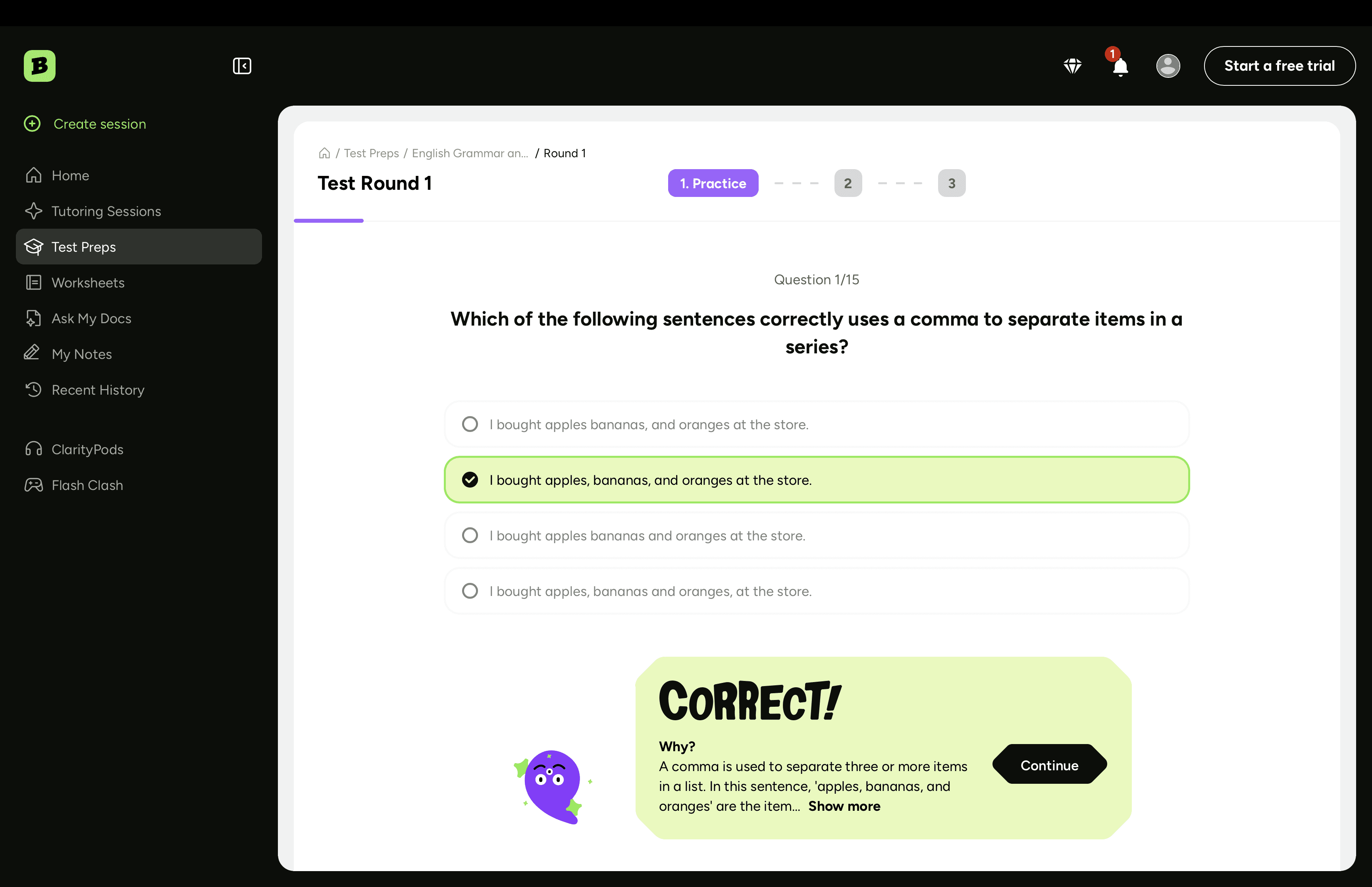

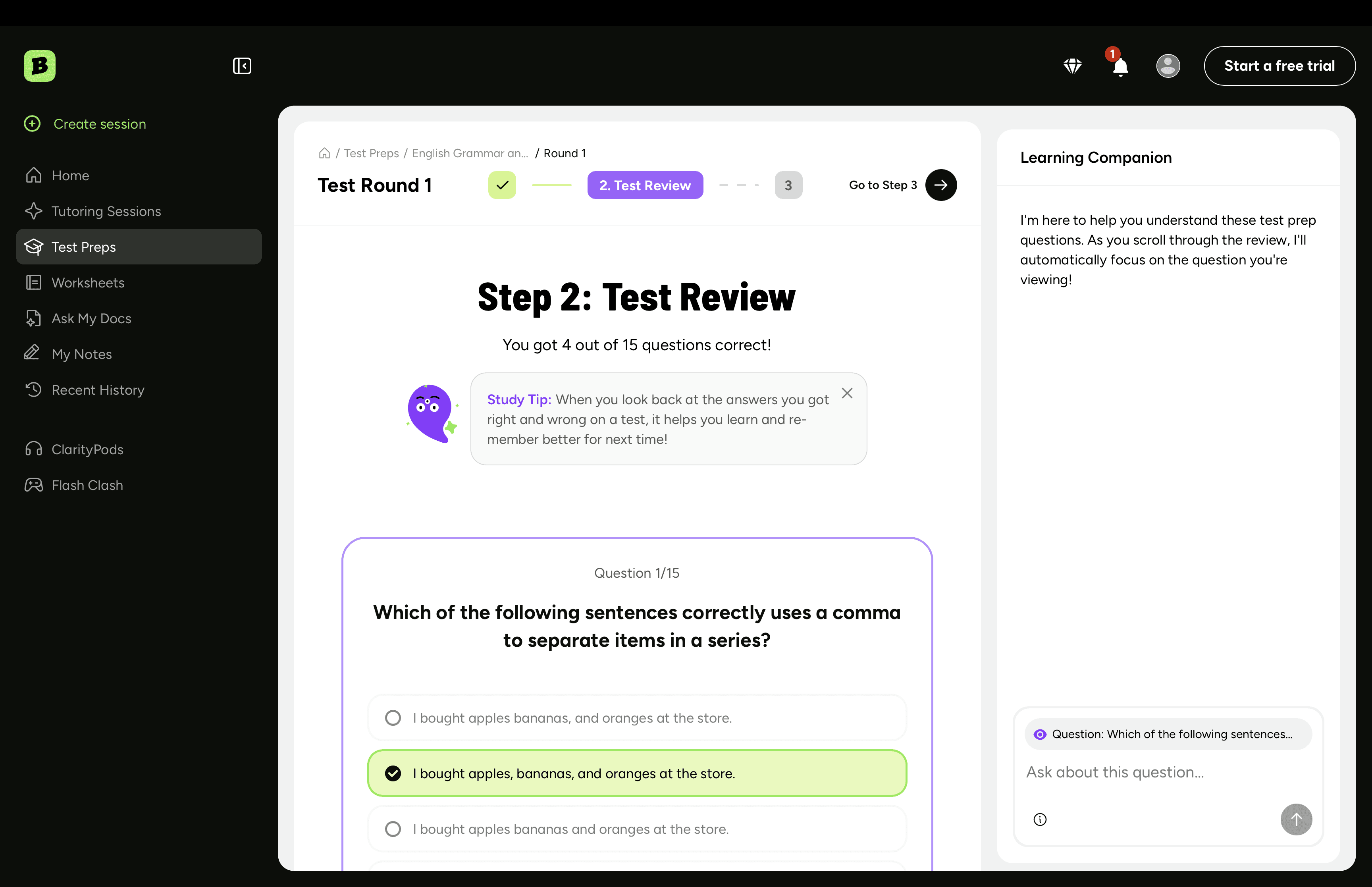

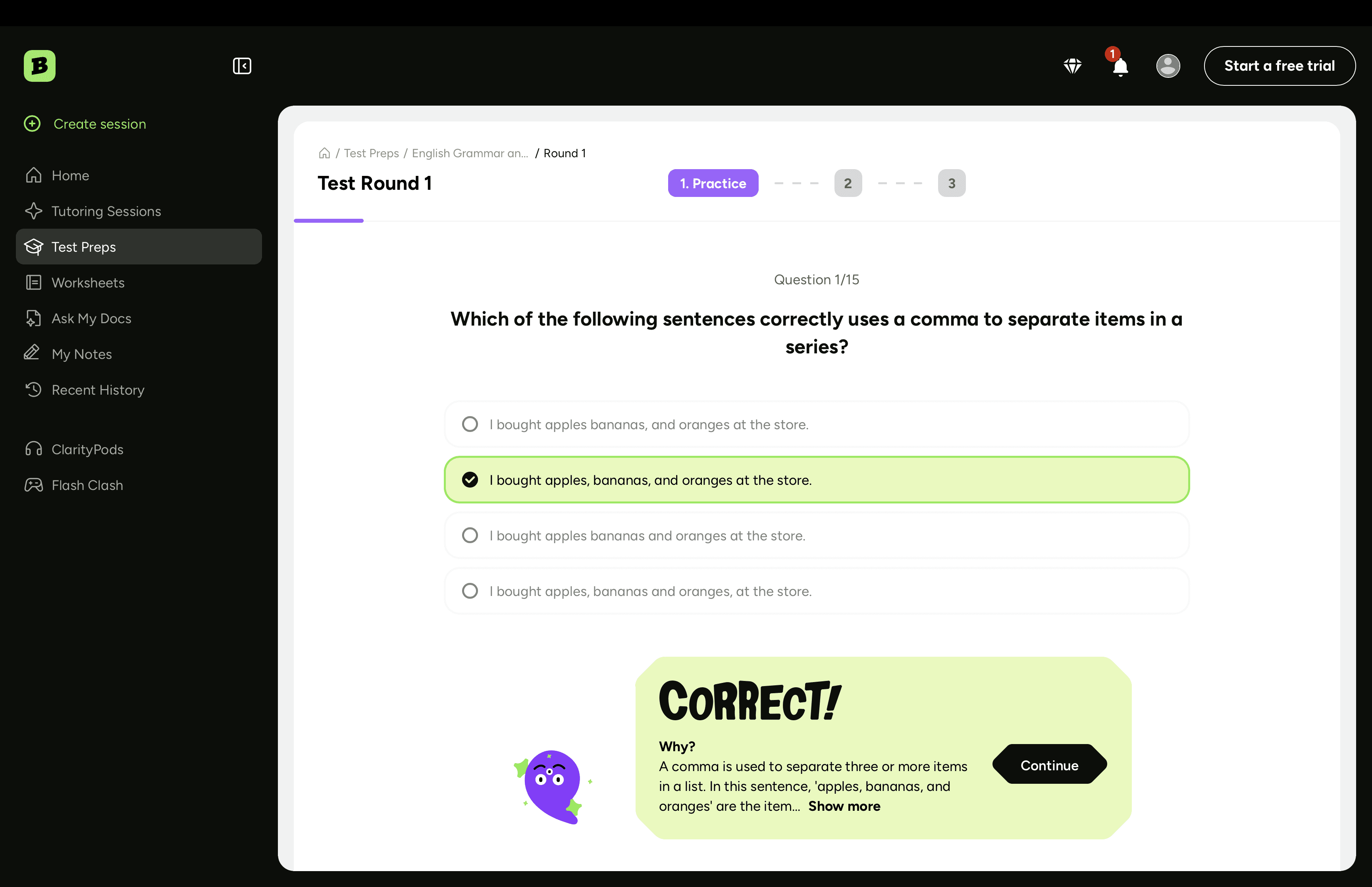

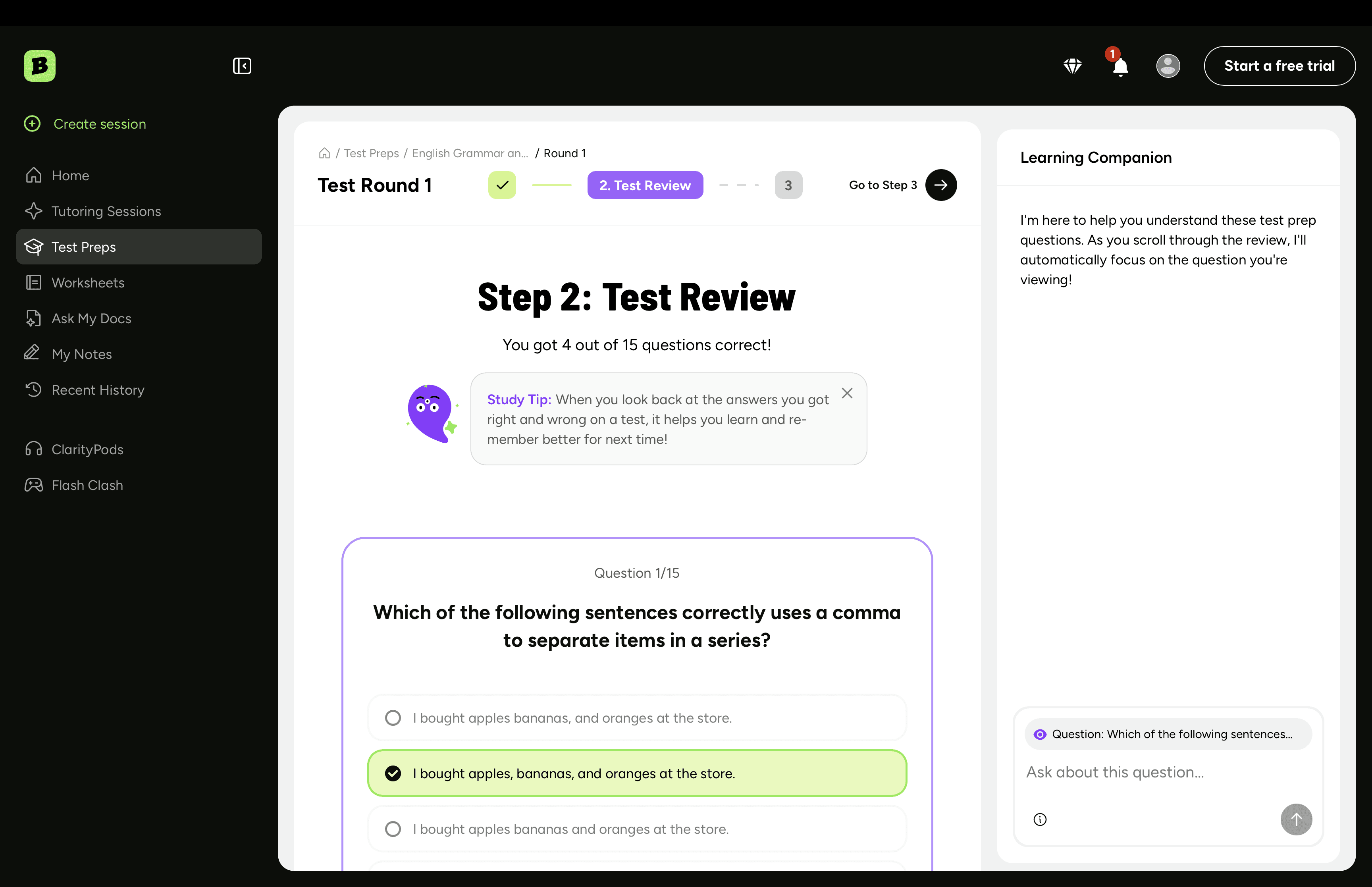

This is where AI product design gets interesting. Model outputs aren't deterministic, and a 7th grader can't spot a hallucinated question. Two principles shaped every screen:

Make AI confidence legible. Every generated question shows topic and difficulty up front, so students know what's being tested.

Always give an out. Every quiz question has a "this seems off" flag. Flagging hides the question for that student and feeds back into retraining. Two birds, one stone.
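The "always give an out" principle comes down to a small piece of state: a per-student hidden set plus a queue of flags for the retraining side. A minimal sketch, assuming flags are recorded per (student, question) pair; `FlagStore` and its methods are hypothetical names, not Brainly's API.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class FlagStore:
    """Hypothetical store for "this seems off" flags (illustrative only)."""
    # student_id -> set of question_ids hidden for that student
    hidden: dict[str, set[str]] = field(default_factory=lambda: defaultdict(set))
    # (student_id, question_id) pairs waiting to be used as a retraining signal
    retraining_queue: list[tuple[str, str]] = field(default_factory=list)

    def flag(self, student_id: str, question_id: str) -> None:
        if question_id in self.hidden[student_id]:
            return  # this student already flagged it; don't double-count
        # 1) Hide the question for this student only.
        self.hidden[student_id].add(question_id)
        # 2) Queue the flag so the model side can review and retrain.
        self.retraining_queue.append((student_id, question_id))

    def visible_questions(self, student_id: str, question_ids: list[str]) -> list[str]:
        """Filter a generated quiz down to the questions this student hasn't flagged."""
        return [q for q in question_ids if q not in self.hidden[student_id]]
```

Keeping the hide per-student means one student's flag doesn't pull a question from everyone else; deciding whether a question is actually wrong is left to the retraining pipeline.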

The pivot

V1 had separate spaces for homework, quizzes, study plans, and past reviews. Five participants into a 15-person usability test, I had my answer. Students were treating the quiz library and the study plan as different products. One 9th grader said "wait, this is the same thing?" and she was right.

Killed it. Consolidated into a single dashboard organized around upcoming exams, with quizzes nested under the exam they prep for. Next round of testing finished in half the time.

The lesson I keep coming back to: AI features tempt you to expose the machinery. Resist it. Students don't care about the model pipelines. They care about the test on Friday.

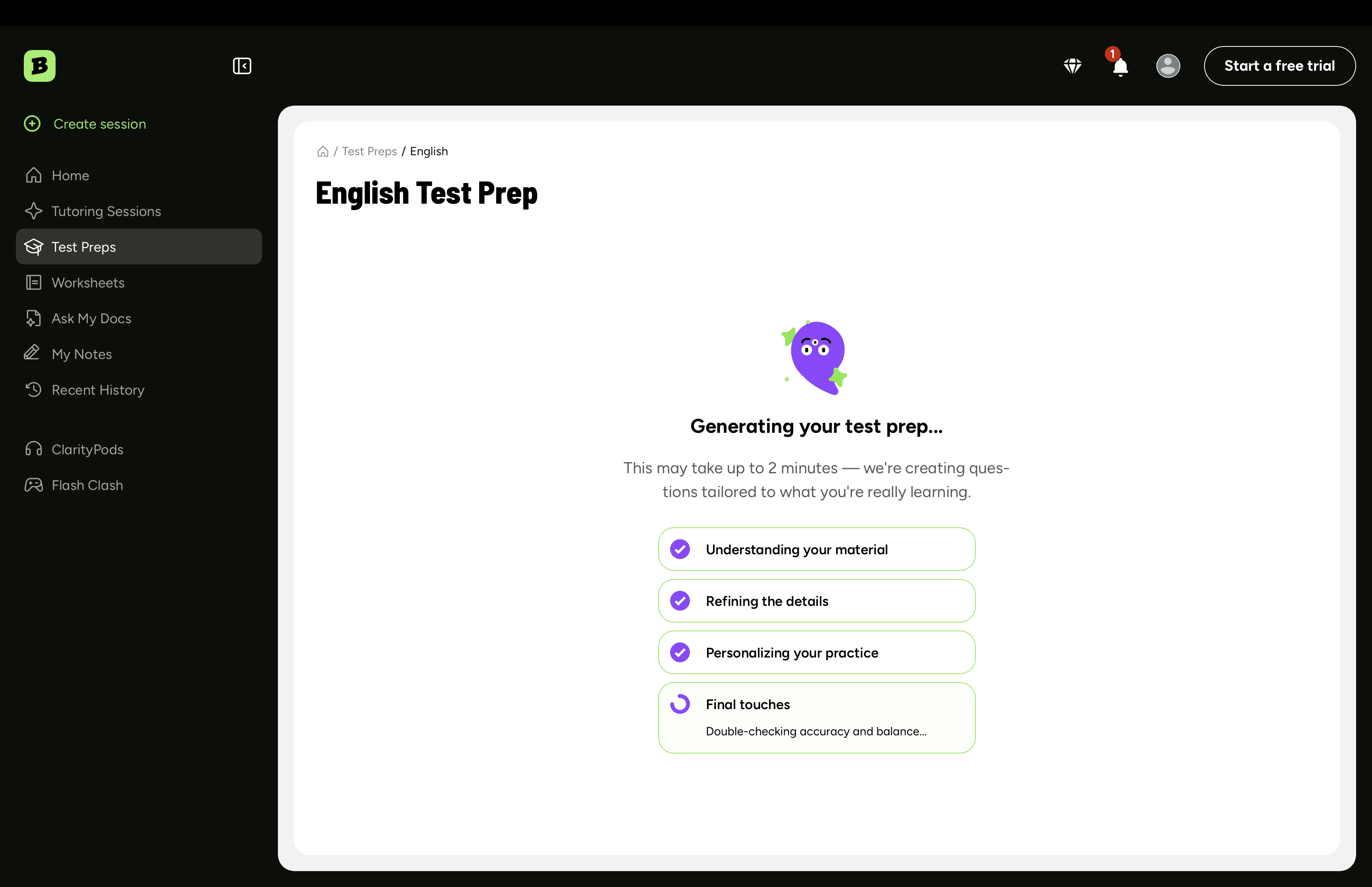

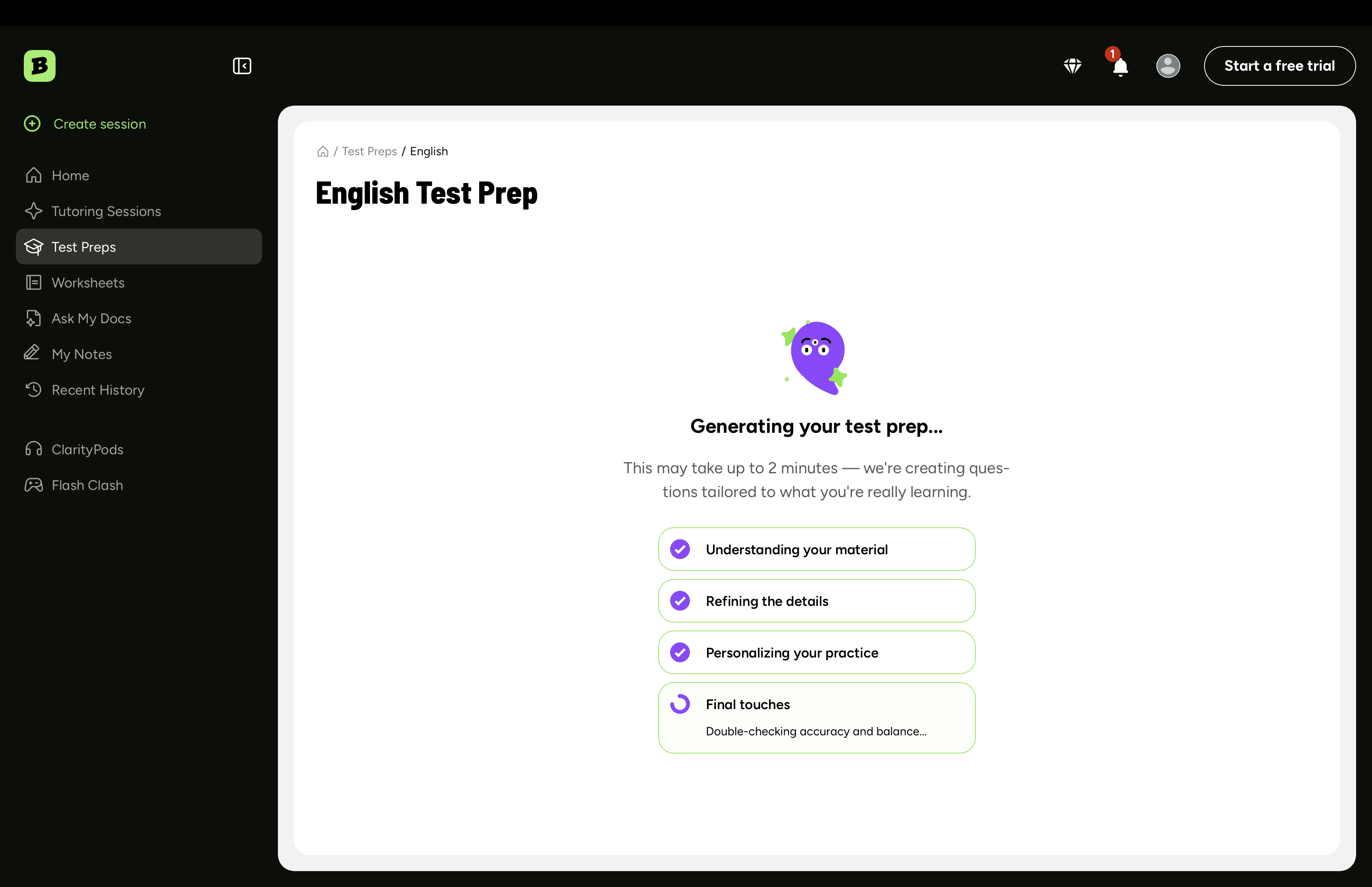

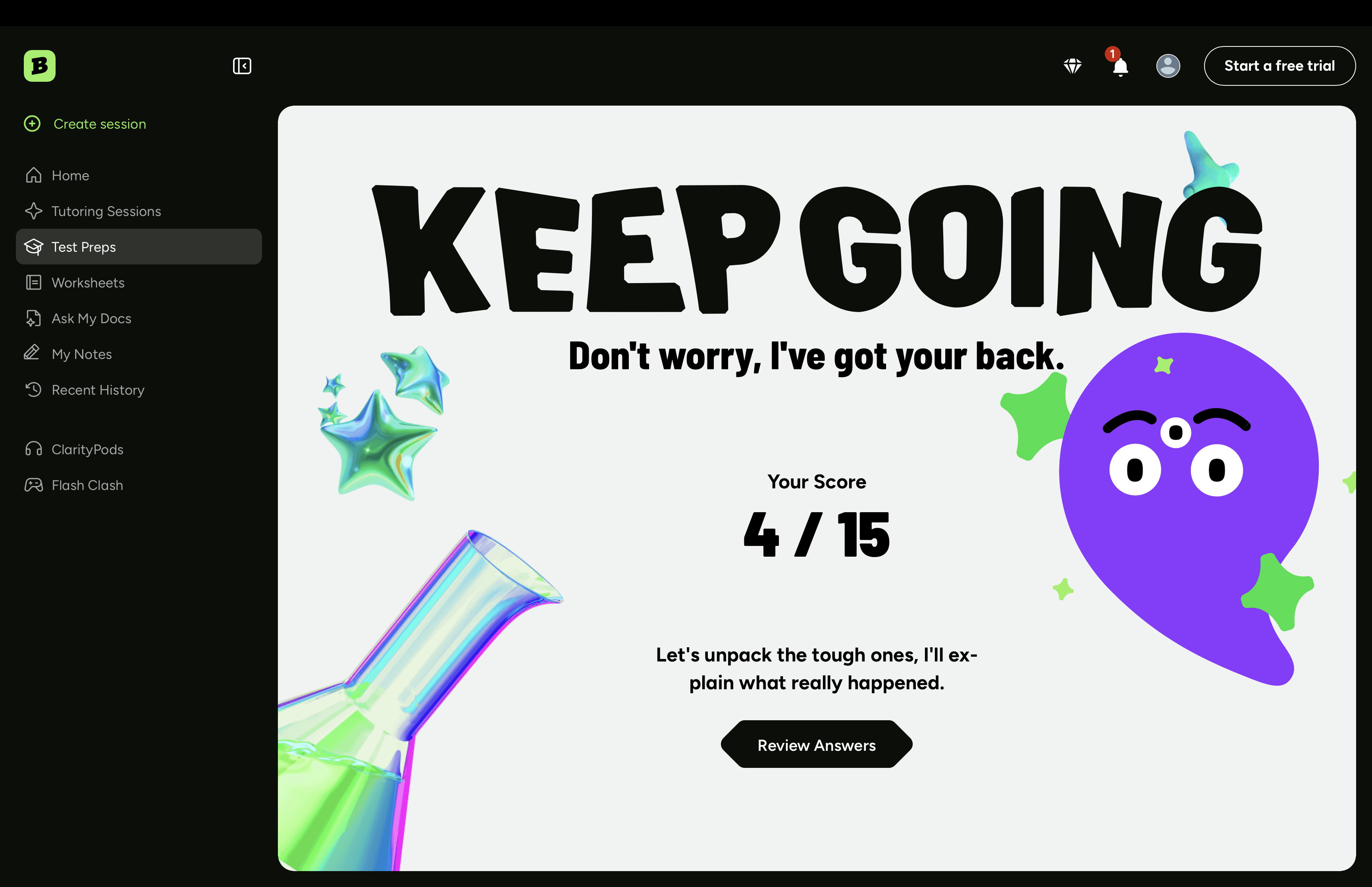

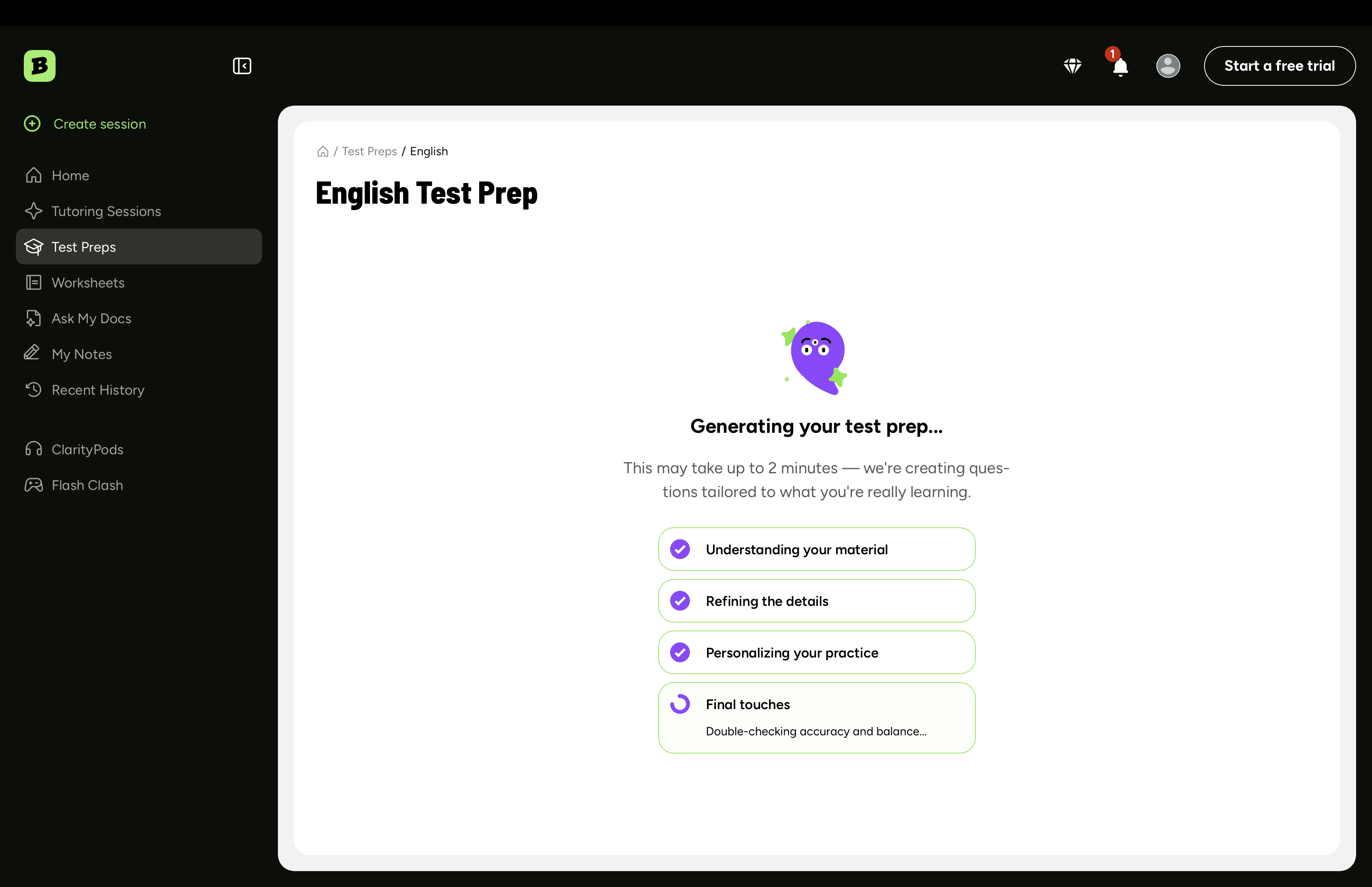

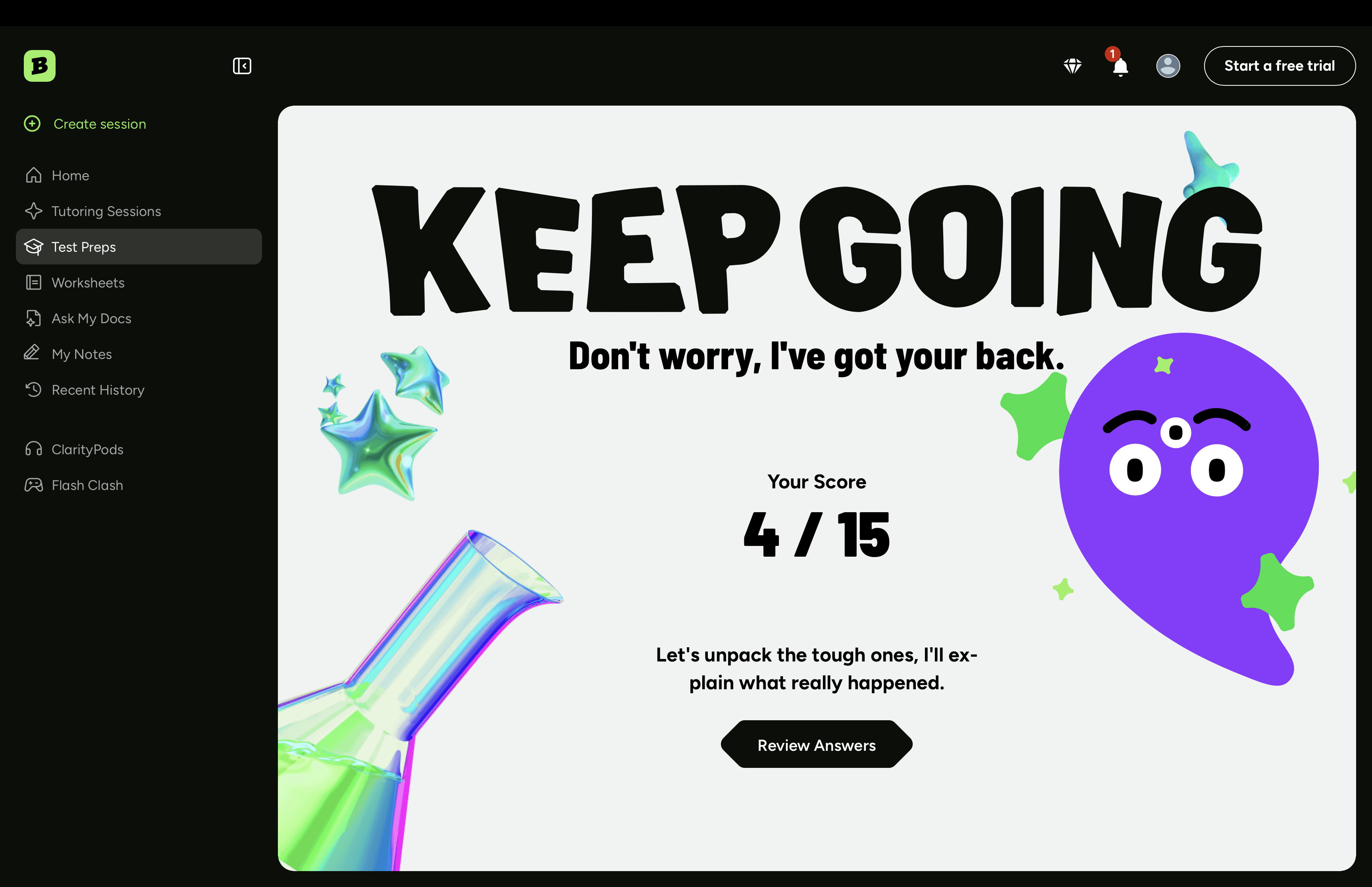

The shipped flow

Homework done in Brainly → tap "Add to Test Prep" → pick exam date → AI generates study plan + practice questions → daily nudges scheduled around the student's school calendar → performance feeds the next session (wrong answers come back harder, mastered topics space out).
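The last step of the flow, where performance feeds the next session, is a spaced-repetition loop. Below is a minimal sketch that assumes a simple interval-doubling rule in the spirit of the Leitner system, not whatever Brainly actually shipped. It reads "wrong answers come back harder" as: a missed topic returns in the next session one difficulty step higher. The names (`TopicState`, `update_after_session`, `due_today`) and the 1-5 difficulty scale are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

MAX_DIFFICULTY = 5  # hypothetical 1-5 difficulty scale


@dataclass
class TopicState:
    """Per-student, per-topic review state (illustrative names only)."""
    topic: str
    interval_days: int = 1                # days until the topic comes back
    difficulty: int = 1                   # current question difficulty
    next_review: Optional[date] = None    # None means "not scheduled yet"


def update_after_session(state: TopicState, correct: bool,
                         today: date, exam_date: date) -> TopicState:
    if correct:
        # Mastered: space the topic out by doubling the gap.
        state.interval_days *= 2
    else:
        # Missed: bring it back next session, one step harder.
        state.interval_days = 1
        state.difficulty = min(state.difficulty + 1, MAX_DIFFICULTY)
    # Schedule the next review no later than the day before the exam
    # and no earlier than today.
    last_slot = exam_date - timedelta(days=1)
    proposed = today + timedelta(days=state.interval_days)
    state.next_review = max(today, min(proposed, last_slot))
    return state


def due_today(states: list[TopicState], today: date) -> list[str]:
    """Topics for today's session: unscheduled or due/overdue ones."""
    return [s.topic for s in states
            if s.next_review is None or s.next_review <= today]
```

Capping `next_review` at the day before the exam is what ties spacing to the exam date the student picked: a mastered topic can drift out, but not past the test.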

Reflections

Empathy is structural, not decorative. When your user is anxious, every design decision either adds to that load or removes it. There's no neutral.

Restraint beats power. The temptation with AI is to show off everything the model can do. The better move is to let the model recede so the student feels competent.

For v2: a tighter feedback loop on flagged questions (retraining lagged behind flag volume), and a proper parent surface. Parents are a real influence on study behavior, and we skipped them in v1.
